Model Context Protocol (MCP): the missing interoperability layer for autonomous AI workflows

Model Context Protocol (MCP) is an open standard for how LLM-powered agents discover capabilities, fetch contextual data, and invoke actions across systems. Think of MCP as a universal connector: a common runtime contract that lets agents treat external tools, data stores, and instruction templates as first-class, discoverable resources instead of brittle prompt snippets.

The integration problem

Before protocolized context, integrating an agent with external services was manual and fragile:

- Agents could be instructed to call an API, but each agent needed bespoke guidance on that API’s auth, parameters, and error semantics.

- Tool knowledge lived in prompts or orchestration glue, so tools weren’t reusable across agents.

- Builders using orchestration platforms like n8n or LangGraph had to re-encode tool behavior per agent, producing non-modular, hard-to-test flows.

The result: integrations were one-offs, plans were brittle, and agents could not reliably compose or hand off work to each other.

What MCP is (concise definition)

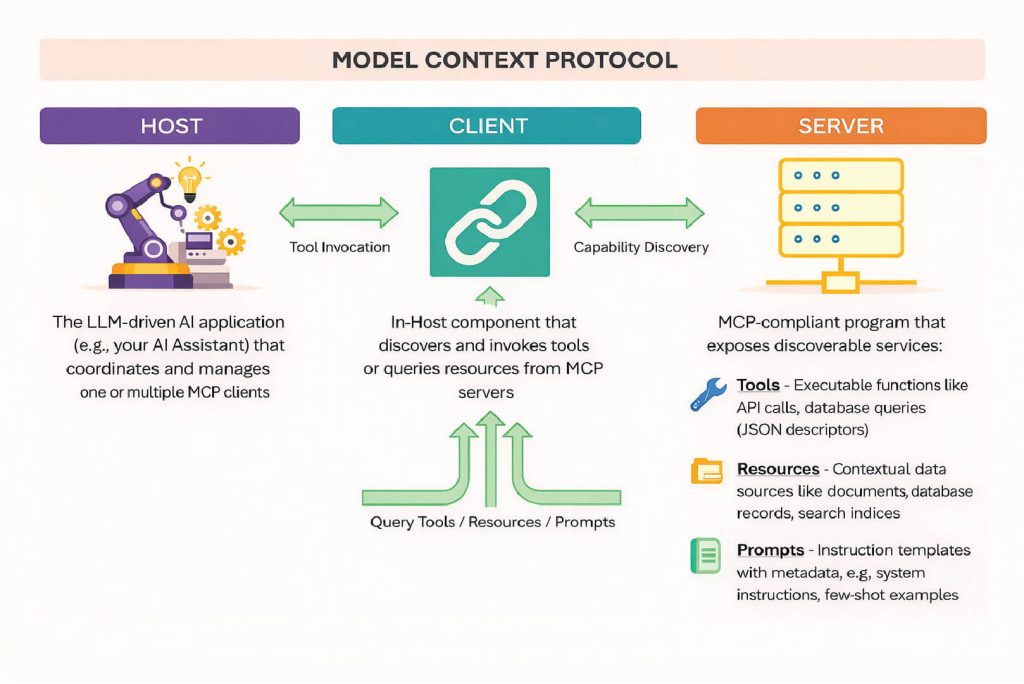

MCP defines a machine-readable, schema-driven contract for three roles:

- Host: the LLM application that orchestrates one or more MCP clients (the runtime that runs planner/agent loops).

- Client: an in-process component inside the Host that holds a connection to a Server, reads the descriptors it provides (tools, resources, prompts), and sends invocation requests under the agent's control. The tool itself runs on the Server, not in the Client.

- Server: the authoritative provider of capabilities and context, exposing a registry of executable tools, indexed resources (files, DB rows, search), and canonical prompt templates.

MCP standardizes metadata for each capability: auth requirements, input/output schemas, usage examples, and runtime hints. That lets clients perform discovery and invoke tools without ad-hoc prompt engineering.

(The MCP specification and exemplar server implementations are documented on the official MCP site, modelcontextprotocol.io.)
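To make the Client and Server exchange concrete, here is a minimal sketch of the two messages a Client sends most often. In the published protocol these travel as JSON-RPC 2.0 requests, with `tools/list` for discovery and `tools/call` for invocation. The tool name `query_tasks` and its arguments are hypothetical, and the `make_request` helper is an illustration rather than part of any SDK.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids; any value unique per request works


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request envelope as a plain dict."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# Discovery: ask a server which tools it offers.
list_tools = make_request("tools/list")

# Invocation: call one tool by name with JSON arguments.
# "query_tasks" and its arguments are hypothetical.
call_tool = make_request(
    "tools/call",
    {"name": "query_tasks", "arguments": {"project": "GTM", "status": "open"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

A real session also starts with an initialization handshake and runs over a transport such as stdio or HTTP; both are left out here to keep the message shapes visible.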

Core building blocks (what the protocol exposes)

- Tool descriptors: machine-readable definitions that give each tool a name, a purpose, and an I/O schema, and that can also carry sample calls, error semantics, required scopes/credentials, and idempotency notes.

- Resource APIs: standardized endpoints for fetching contextual data (documents, search results, DB snippets, cached embeddings) together with provenance and freshness metadata.

- Prompt schema: structured instruction templates that pair task intent, role framing, and tool hints so models receive consistent grounding across Hosts.

- Execution & telemetry: a canonical log of tool invocations, responses, and artifacts that enables replay, auditing, and human review.
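As an illustration of the first building block, the sketch below shows what a tool descriptor might look like and how a Client could check arguments against it before sending a call. The field names `name`, `description`, and `inputSchema` follow the published tool shape. The `list_pages` tool and the `validate_arguments` helper are hypothetical, and the validator covers only a small subset of JSON Schema (required keys and primitive types).

```python
# Hypothetical descriptor: the field names follow the published tool shape,
# the tool itself is invented for illustration.
LIST_PAGES = {
    "name": "list_pages",
    "description": "List workspace pages edited since a given date.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "since": {"type": "string"},
            "limit": {"type": "integer"},
        },
        "required": ["since"],
    },
}

# JSON Schema primitive type names mapped to Python types.
_PY_TYPES = {"string": str, "integer": int, "number": (int, float),
             "boolean": bool, "object": dict, "array": list}


def validate_arguments(descriptor, arguments):
    """Return a list of problems; an empty list means the call looks valid.

    Checks only `required` keys and primitive `type`s. Rejecting unknown
    arguments is a strictness choice made here, not a JSON Schema default.
    """
    schema = descriptor["inputSchema"]
    props = schema.get("properties", {})
    problems = [f"missing required argument: {key}"
                for key in schema.get("required", []) if key not in arguments]
    for key, value in arguments.items():
        if key not in props:
            problems.append(f"unknown argument: {key}")
            continue
        expected = _PY_TYPES.get(props[key].get("type"))
        # bool is a subclass of int in Python, so reject it explicitly
        # unless the schema really asks for a boolean.
        if expected and (not isinstance(value, expected)
                         or (isinstance(value, bool) and expected is not bool)):
            problems.append(f"wrong type for {key}")
    return problems


print(validate_arguments(LIST_PAGES, {"since": "2024-01-01", "limit": 10}))
print(validate_arguments(LIST_PAGES, {"limit": "ten"}))
```

In practice a full JSON Schema validator would replace the hand-rolled checks; the point is that the descriptor, not the prompt, carries the contract.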

What MCP enables (practical capabilities)

- Discoverable tooling: agents can query “what can I call?” and receive rich metadata (auth, example payloads, input schema).

- Safe composition: a planner can synthesize multi-step plans that call heterogeneous tools (search → transform → persist) with validated schemas.

- Shared memory and context: Servers surface summaries, embeddings, and prior outputs so agents reason over the same history.

- Pluggable auth: MCP specifies how credential material is surfaced to the Host (token scopes, ephemeral credentials), reducing secret-leakage risks.

- Reusability: once a tool is described by an MCP server, any MCP-aware agent can integrate it without per-agent prompt surgery.
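The safe-composition idea can be sketched as a pre-flight check: before running a search → transform → persist plan, a planner confirms that each step's required inputs are produced by an earlier step. The `TOOLS` table, its `requires`/`produces` fields, and `check_plan` are all hypothetical simplifications standing in for real input and output schemas.

```python
# Hypothetical descriptors for a search -> transform -> persist plan.
# `requires` and `produces` are simplified stand-ins for I/O schemas.
TOOLS = {
    "search": {"requires": {"query": str}, "produces": {"documents": list}},
    "transform": {"requires": {"documents": list}, "produces": {"summary": str}},
    "persist": {"requires": {"summary": str}, "produces": {"record_id": str}},
}


def check_plan(steps, initial_inputs):
    """Verify each step's required inputs exist with the expected type.

    Returns the first problem found, or None when the plan type-checks.
    Nothing is executed; only the declared contracts are compared.
    """
    available = {name: type(value) for name, value in initial_inputs.items()}
    for step in steps:
        tool = TOOLS.get(step)
        if tool is None:
            return f"unknown tool: {step}"
        for field, expected in tool["requires"].items():
            if field not in available:
                return f"{step} needs '{field}', which no earlier step produces"
            if available[field] is not expected:
                return f"{step} expects '{field}' as {expected.__name__}"
        # Outputs of this step become inputs available to later steps.
        available.update(tool["produces"])
    return None


print(check_plan(["search", "transform", "persist"], {"query": "launch notes"}))
print(check_plan(["search", "persist"], {"query": "launch notes"}))
```

Catching the missing `summary` before any tool runs is the kind of failure that stays invisible when tool knowledge lives only in prompts.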

Concrete scenario: a GTM summary agent

Imagine a product team that wants a weekly go-to-market (GTM) briefing that aggregates notes in Notion and tasks in Asana:

- Without MCP: the agent must be hand-taught each API’s endpoints, auth, and data model; tool logic is embedded in prompts or host glue.

- With MCP: the Host queries the Notion and Asana MCP servers and receives tool descriptors (list pages, query tasks), sample payloads, and schema contracts. The agent plans: fetch recent pages → extract action items → correlate with Asana tasks → write a summary back to Notion. Because the tools are described uniformly, the same plan runs reliably across different agents.

(Several teams, including Betaworks, have prototyped cross-app automation using MCP-style registries, and common productivity platforms like Notion and Asana are natural integration points.)
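The plan in this scenario (fetch recent pages → extract action items → correlate with tasks → write a summary) can be sketched with in-memory stand-ins. Nothing here calls Notion or Asana: the data, the function names, and the "TODO:" convention for action items are assumptions made purely for illustration.

```python
# In-memory stand-ins for what the Notion and Asana servers might return.
# All names, fields, and data are hypothetical.
PAGES = [
    {"title": "Launch sync",
     "text": "TODO: finalize pricing page\nNotes on beta\nTODO: draft launch email"},
]
TASKS = [
    {"name": "Finalize pricing page", "status": "open"},
    {"name": "Book webinar slot", "status": "done"},
]


def fetch_recent_pages():
    """Stand-in for a 'list pages' tool call."""
    return PAGES


def extract_action_items(pages):
    """Collect lines that start with 'TODO:' from every page."""
    items = []
    for page in pages:
        for line in page["text"].splitlines():
            if line.startswith("TODO:"):
                items.append(line[len("TODO:"):].strip())
    return items


def correlate(items, tasks):
    """Pair each action item with a task of the same name (case-insensitive)."""
    by_name = {task["name"].lower(): task for task in tasks}
    return [(item, by_name.get(item.lower())) for item in items]


def write_summary(pairs):
    """Stand-in for writing the briefing back to a page; returns the text."""
    lines = ["Weekly GTM briefing"]
    for item, task in pairs:
        status = task["status"] if task else "untracked"
        lines.append(f"- {item}: {status}")
    return "\n".join(lines)


summary = write_summary(correlate(extract_action_items(fetch_recent_pages()), TASKS))
print(summary)
```

With real servers, each stand-in would become a tool call whose name and schema come from discovery, while the shape of the plan stays the same.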

Developer experience: quickstart and server primitives

Reference implementations provide:

- A tool registry with metadata endpoints (name, schema, examples).

- Resource storage for files and embeddings (queryable with provenance).

- Prompt packs (system + few-shot templates) that Hosts can adopt to maintain consistent model behavior.

- Execution logs for observability and human-in-the-loop interventions.

A prototype local server can be deployed in minutes and is useful for experimenting with memory types, tool schemas, and execution auditing.
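For a feel of what such a local server does, the sketch below combines two of the primitives listed above, a tool registry and an execution log, in a toy in-memory class. It is not a reference implementation: `LocalServer` and the `add` tool are hypothetical, there is no transport or initialization handshake, and only `tools/list` and `tools/call` are handled. The result shape (a `content` list of text items) and the JSON-RPC error codes follow the published conventions as far as this sketch goes.

```python
import json


class LocalServer:
    """Toy in-memory server: a tool registry plus an execution log."""

    def __init__(self):
        self._tools = {}  # name -> (descriptor, handler)
        self.log = []     # one entry per successful tools/call, for audit

    def register(self, name, description, input_schema, handler):
        descriptor = {"name": name, "description": description,
                      "inputSchema": input_schema}
        self._tools[name] = (descriptor, handler)

    def handle(self, request):
        """Dispatch one JSON-RPC 2.0 request dict and return a response dict."""
        method, req_id = request.get("method"), request.get("id")
        if method == "tools/list":
            result = {"tools": [d for d, _ in self._tools.values()]}
        elif method == "tools/call":
            params = request.get("params", {})
            entry = self._tools.get(params.get("name"))
            if entry is None:
                return self._error(req_id, -32602, "unknown tool")
            arguments = params.get("arguments", {})
            output = entry[1](**arguments)
            self.log.append({"tool": params["name"],
                             "arguments": arguments, "output": output})
            result = {"content": [{"type": "text", "text": json.dumps(output)}]}
        else:
            return self._error(req_id, -32601, "method not found")
        return {"jsonrpc": "2.0", "id": req_id, "result": result}

    @staticmethod
    def _error(req_id, code, message):
        return {"jsonrpc": "2.0", "id": req_id,
                "error": {"code": code, "message": message}}


server = LocalServer()
server.register(
    "add", "Add two integers.",
    {"type": "object",
     "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
     "required": ["a", "b"]},
    lambda a, b: a + b,
)

print(server.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
print(server.handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                     "params": {"name": "add", "arguments": {"a": 2, "b": 3}}}))
```

After the two requests, `server.log` holds one entry for the `add` call, which is the raw material for replay and human review.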

Why MCP matters (implications for AI systems)

- Modularity at scale — tool creators publish schema-first descriptors; agent creators consume them. No more brittle copy-paste of API usage into prompts.

- Safer automation — explicit I/O contracts and auth scopes reduce surprise side effects and make verification easier.

- Interoperable ecosystems — MCP lets multiple agents and hosts collaborate around a single source of truth (tool and resource metadata), enabling shared memory and multi-agent coordination.

- Faster iteration — teams can evolve tools independently while keeping agent behavior stable via the protocol’s compatibility constraints.

Closing note

MCP reframes integrations from prompt craft to interface design: define your tools, surface their intent and schema, and let agents discover and compose them reliably. For teams building agentic systems that must scale, interoperate, and be auditable, MCP is a practical interoperability layer — the “USB-C” that makes AI workflows plug-and-play.
